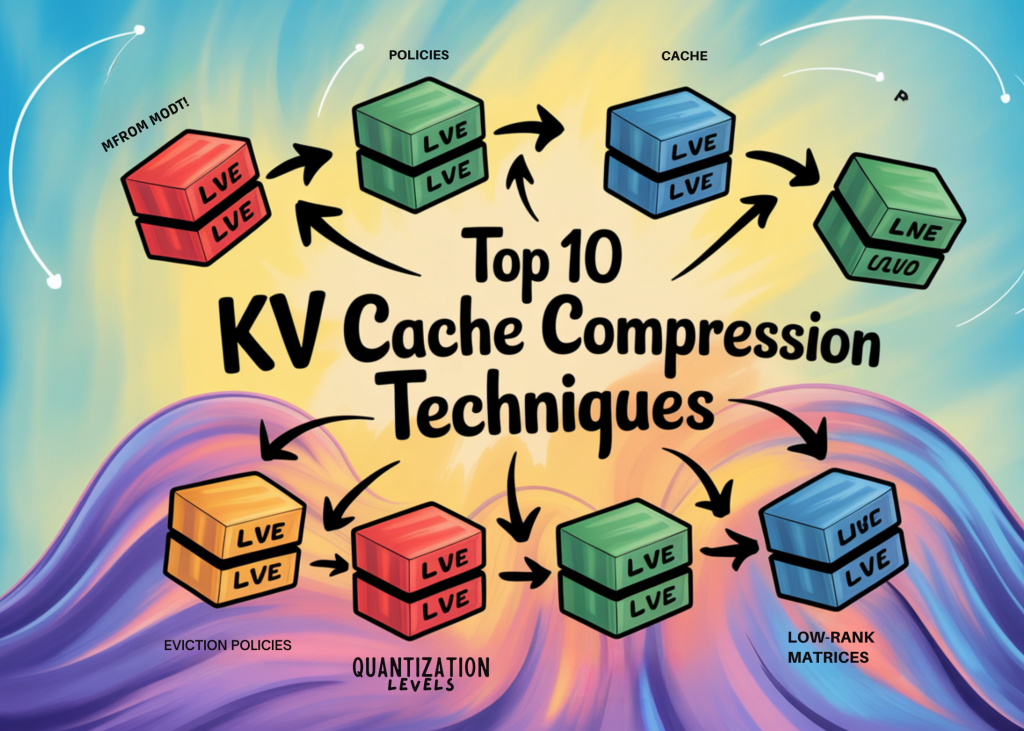

Top 10 KV Cache Compression Techniques for LLM Inference: Reducing Memory Overhead Across Eviction, Quantization, and Low-Rank Methods

As large language models scale to longer context windows and serve more concurrent users, the key-value (KV) cache has emerged as a primary memory bottleneck in production inference systems. For a 30-billion-parameter model with a batch size of 128 and an input length of 1,024 tokens, the resulting KV cache can occupy up to 180 GB of memory. For reference, the weights of a 7-billion-parameter model consume about 14 GB of GPU memory at 16-bit precision, while the KV cache for the same model can, depending on batch size and context length, require around 72 GB.
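As a rough sanity check on the 180 GB figure, the cache size for standard multi-head attention can be estimated from the model shape alone. The sketch below assumes an OPT-30B-like configuration (48 layers, hidden size 7,168) and 16-bit cache entries; those shape numbers and the helper name `kv_cache_bytes` are illustrative assumptions rather than figures quoted above.

```python
def kv_cache_bytes(num_layers, hidden_size, seq_len, batch_size, bytes_per_value=2):
    """Bytes needed to cache keys and values for standard multi-head attention."""
    # Factor of 2: one key tensor and one value tensor per layer.
    return 2 * num_layers * hidden_size * seq_len * batch_size * bytes_per_value


# Assumed OPT-30B-like shape: 48 layers, hidden size 7168, FP16 cache entries.
size = kv_cache_bytes(num_layers=48, hidden_size=7168, seq_len=1024, batch_size=128)
print(f"{size / 1e9:.1f} GB")  # prints 180.4 GB
```

Every term in the product is a lever: eviction shrinks `seq_len`, quantization shrinks `bytes_per_value`, and architectural or low-rank methods shrink the effective `hidden_size` of what is stored.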

Compressing the KV cache reduces memory pressure, increases batch sizes, and directly improves throughput without retraining the base model. Over the past two years, several distinct compression strategies have emerged from research. This article breaks down the ten most important ones with emphasis on how each works and where it fits in a practical inference pipeline.

Token Eviction with H2O (Heavy Hitter Oracle)

H2O, published at NeurIPS 2023, is one of the foundational token eviction methods. Its core observation is that a small portion of tokens, called Heavy Hitters (H2), contributes the majority of attention score mass during generation. H2O dynamically retains a balance of recent tokens and H2 tokens, keeping the KV cache at a fixed size in every Transformer layer. Selection is driven by the attention scores each cached token has accumulated over the decoding steps seen so far.

Attention weights follow a power-law distribution, which means evicting low-scoring tokens incurs minimal accuracy loss in practice. H2O is a decoding-phase method and does not reduce prefill computation, which remains a limitation for long-context prompts. With 20% heavy hitters, H2O improves throughput over Hugging Face Accelerate by up to 29× on OPT-6.7B and OPT-30B.
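The selection rule can be sketched in a few lines. This is a simplified, one-shot illustration for a single attention head, assuming NumPy; the actual method applies the rule greedily at every decoding step, and the function name `h2o_keep_indices` and the toy attention matrix are hypothetical.

```python
import numpy as np


def h2o_keep_indices(attn_history, num_heavy, num_recent):
    """Pick cache positions to keep: top accumulated-attention tokens plus recent ones.

    attn_history: (num_queries, num_cached_tokens) attention weights for one head.
    """
    num_tokens = attn_history.shape[1]
    if num_heavy + num_recent >= num_tokens:
        return np.arange(num_tokens)  # budget not exceeded, nothing to evict
    accumulated = attn_history.sum(axis=0)  # attention mass received per token
    recent = np.arange(num_tokens - num_recent, num_tokens)
    older_scores = accumulated[: num_tokens - num_recent]
    # Heavy hitters are chosen only among tokens outside the recent window.
    heavy = np.argsort(older_scores)[len(older_scores) - num_heavy:]
    return np.sort(np.concatenate([heavy, recent]))


# Toy example: token 0 and token 3 receive most of the attention.
attn = np.array([
    [0.70, 0.10, 0.05, 0.05, 0.05, 0.05],
    [0.60, 0.05, 0.05, 0.20, 0.05, 0.05],
    [0.50, 0.05, 0.05, 0.25, 0.05, 0.10],
])
keep = h2o_keep_indices(attn, num_heavy=2, num_recent=2)
print(keep)  # prints [0 3 4 5]
```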

StreamingLLM (Attention Sink Retention)

StreamingLLM is designed for scenarios where LLMs must handle very long or infinite input streams. Its strategy is to always keep the KV states of the first few tokens, which serve as attention sinks, and combine them with a sliding window of the most recent tokens up to the available memory budget.

The insight is that initial tokens, regardless of their semantic content, function as structural anchors that receive disproportionately high attention throughout generation. Dropping them causes significant accuracy degradation, while preserving them alongside a recency window stabilizes outputs. StreamingLLM is fast and hardware-friendly but does not use importance scoring, which means it can discard semantically critical middle-context tokens. It is best suited for streaming dialogue applications where recent context dominates.
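Because the policy ignores attention scores entirely, the set of retained positions depends only on the sequence length. A minimal sketch, where the function name and the default sizes (4 sink tokens, 8-token window) are illustrative assumptions:

```python
def streaming_keep_indices(num_tokens, num_sinks=4, window=8):
    """Positions kept in the cache: the first `num_sinks` tokens plus the last `window`."""
    if num_tokens <= num_sinks + window:
        return list(range(num_tokens))  # everything still fits in the budget
    sinks = list(range(num_sinks))
    recent = list(range(num_tokens - window, num_tokens))
    return sinks + recent


kept = streaming_keep_indices(num_tokens=20, num_sinks=4, window=8)
print(kept)  # prints [0, 1, 2, 3, 12, 13, 14, 15, 16, 17, 18, 19]
```

Positions 4 through 11 are dropped regardless of content, which is exactly the middle-context blind spot described above.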

SnapKV (Observation Window Compression)

SnapKV addresses the prefill stage specifically, targeting long-prompt scenarios. It uses a small observation window at the end of the prompt to predict token importance. The attention scores from queries in this observation window are aggregated to vote for important positions — the heavy hitters — in the prefix.

Unlike H2O, SnapKV employs a pooling layer over the observation window’s attention scores to select clustered important KV positions per attention head, rather than using a flat cumulative importance score across the full sequence. The SnapKV authors report that this head-specific selection preserves accuracy better than H2O at the same cache budget. SnapKV has become a widely used baseline for prefill-phase compression and is directly comparable to H2O on benchmarks such as LongBench.
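The voting-plus-pooling step can be illustrated for a single head as follows. This is a sketch assuming NumPy, an odd pooling kernel, and max pooling with stride 1; the function name `snapkv_keep_indices` and the toy attention values are hypothetical, and the pooling type and kernel size are illustrative choices.

```python
import numpy as np


def snapkv_keep_indices(window_attn, budget, kernel=3):
    """Select prefix positions voted for by the observation window, then keep the window.

    window_attn: (window, prompt_len) attention weights of the last `window`
                 prompt queries over all prompt positions, for one head.
    """
    window, prompt_len = window_attn.shape
    prefix_len = prompt_len - window
    if budget >= prefix_len:
        return np.arange(prompt_len)
    # Votes: total attention the observation-window queries pay to each prefix position.
    votes = window_attn[:, :prefix_len].sum(axis=0)
    # 1D max pooling (stride 1, same padding) so neighbours of a peak are kept with it.
    pad = kernel // 2
    padded = np.pad(votes, pad, mode="constant", constant_values=-np.inf)
    pooled = np.array([padded[i:i + kernel].max() for i in range(prefix_len)])
    top = np.argsort(pooled, kind="stable")[prefix_len - budget:]
    window_idx = np.arange(prefix_len, prompt_len)
    return np.sort(np.concatenate([top, window_idx]))


# Toy prompt of 10 tokens; the last 2 form the observation window.
window_attn = np.array([
    [0.01, 0.01, 0.02, 0.50, 0.02, 0.01, 0.01, 0.01, 0.41, 0.00],
    [0.01, 0.01, 0.02, 0.50, 0.02, 0.01, 0.01, 0.01, 0.20, 0.21],
])
keep = snapkv_keep_indices(window_attn, budget=3)
print(keep)  # prints [2 3 4 8 9]
```

Position 3 is the only strong peak, yet pooling also retains its neighbours 2 and 4, which is the clustering behaviour that distinguishes this selection from a flat top-k.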

PyramidKV / PyramidInfer (Layer-Wise Pyramidal Allocation)

A key limitation of H2O and SnapKV is that they apply a uniform compression budget across all Transformer layers. PyramidKV addresses this by allocating different cache sizes per layer based on attention pattern structure. The complementary system, PyramidInfer, extends this to the prefill phase itself.

PyramidInfer finds that the number of crucial keys and values that influence future generation decreases layer by layer, and extracts them by measuring consistency in attention weights across recent tokens. By computing fewer keys and values in deeper layers during prefill rather than pruning a pre-computed cache, PyramidInfer reduces memory earlier in the pipeline. Experimental results show PyramidInfer improves throughput by 2.2× compared to Hugging Face Accelerate, with over 54% GPU memory reduction in the KV cache.

The intuition aligns with how information funnels through Transformer depth: early layers need richer context, while deeper layers converge on a smaller set of salient tokens. Assigning compression budgets proportionally to each layer’s actual information density is more efficient than applying a flat budget uniformly.
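One simple way to picture a pyramidal allocation is a budget that decreases linearly with depth. The sketch below is only an illustration of that shape: the linear schedule, the `ratio` parameter, and the function name are assumptions, not the exact allocation rule of either PyramidKV or PyramidInfer.

```python
def pyramid_budgets(num_layers, total_budget, ratio=4.0):
    """Split a total token budget across layers, decreasing linearly with depth.

    `ratio` is how many times larger the first layer's share is than the last layer's.
    """
    if num_layers == 1:
        return [total_budget]
    # Weights interpolate from `ratio` (first layer) down to 1.0 (last layer).
    weights = [ratio - (ratio - 1.0) * i / (num_layers - 1) for i in range(num_layers)]
    scale = total_budget / sum(weights)
    # Rounding means the budgets may not sum exactly to total_budget in general.
    return [max(1, round(w * scale)) for w in weights]


budgets = pyramid_budgets(num_layers=4, total_budget=1000, ratio=4.0)
print(budgets)  # prints [400, 300, 200, 100]
```

A uniform scheme would give each of the four layers 250 tokens; the pyramid spends the same total but concentrates it where more tokens matter.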

KV Cache Quantization — KIVI

KIVI, published at ICML 2024, is a plug-and-play 2-bit KV cache quantization algorithm that requires no fine-tuning. It quantizes the key cache per-channel and the value cache per-token.

The asymmetric scheme is motivated by observed distributional differences: keys exhibit larger channel-wise outliers, while values are better represented per-token. With this hardware-friendly design, KIVI enables models including Llama-2, Falcon, and Mistral to maintain comparable generation quality while reducing peak memory by 2.6×, a figure that includes model weights as well as the KV cache. This enables up to 4× larger batch sizes and increases throughput by 2.35× to 3.47× on real inference workloads. Because the weights stay at their original precision, the reduction in the KV cache alone is larger than 2.6×, and it is this reduction that drives the batch size scaling.
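The difference between the two grouping directions is easiest to see with a synthetic outlier channel. The following sketch assumes NumPy and plain min/max asymmetric quantization; it omits KIVI's grouping and its full-precision window of recent tokens, and all names and the synthetic data are hypothetical.

```python
import numpy as np


def quantize_asym(x, bits, axis):
    """Asymmetric uniform quantization with min/max statistics taken along `axis`."""
    levels = 2 ** bits - 1
    lo = x.min(axis=axis, keepdims=True)
    hi = x.max(axis=axis, keepdims=True)
    scale = np.where(hi > lo, (hi - lo) / levels, 1.0)
    codes = np.clip(np.round((x - lo) / scale), 0, levels).astype(np.uint8)
    return codes, scale, lo


def dequantize(codes, scale, lo):
    return codes * scale + lo


rng = np.random.default_rng(0)
keys = rng.normal(size=(64, 16))  # (tokens, channels)
keys[:, 3] *= 20.0                # synthetic outlier channel
normal = np.arange(16) != 3       # mask for the well-behaved channels

# Per-channel: statistics over the token axis, one scale per channel.
per_channel = dequantize(*quantize_asym(keys, bits=2, axis=0))
# Per-token: statistics over the channel axis, one scale per token.
per_token = dequantize(*quantize_asym(keys, bits=2, axis=1))

err_channel = np.mean((per_channel - keys)[:, normal] ** 2)
err_token = np.mean((per_token - keys)[:, normal] ** 2)
print(f"per-channel error on normal channels: {err_channel:.3f}")
print(f"per-token error on normal channels:   {err_token:.3f}")
```

With per-channel grouping the outlier only inflates the scale of its own channel, so the remaining channels keep a fine quantization grid; with per-token grouping every token's scale is stretched by the outlier.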

KVQuant (Calibrated Mixed-Precision Quantization)

While KIVI applies a fixed asymmetric scheme, KVQuant takes a calibrated, multi-component approach to low-bit KV cache quantization. It combines per-channel key quantization, pre-RoPE key quantization (which avoids quantizing keys after positional embeddings have distorted the distribution), sensitivity-weighted non-uniform quantization that defines quantization levels from calibration data rather than fixed grids, and a dense-and-sparse decomposition that handles extreme outlier values separately from the bulk distribution.

This combination allows KVQuant to push quantization to very low bit widths, including sub-4-bit, with better accuracy than fixed-precision schemes. It targets deployments that need to support extremely long contexts (the paper reports context lengths of up to 10 million tokens). For production systems with stable workloads, the one-time calibration cost is amortized across inference runs.
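Of these components, the dense-and-sparse decomposition is the simplest to illustrate in isolation. The sketch below assumes NumPy, uses a single global magnitude threshold, and substitutes plain uniform quantization for the calibrated non-uniform levels described above; the function names and the synthetic tensor are hypothetical.

```python
import numpy as np


def quantize_uniform(x, bits):
    """Uniform min/max quantization followed by dequantization (simulated)."""
    levels = 2 ** bits - 1
    lo, hi = x.min(), x.max()
    scale = (hi - lo) / levels if hi > lo else 1.0
    return np.round((x - lo) / scale) * scale + lo


def dense_sparse_quantize(x, bits, outlier_frac=0.01):
    """Keep the largest-magnitude entries in full precision, quantize the rest."""
    flat = np.abs(x).ravel()
    k = max(1, int(round(outlier_frac * flat.size)))
    threshold = np.partition(flat, flat.size - k)[flat.size - k]
    mask = np.abs(x) >= threshold          # sparse part: stored unquantized
    dense = np.where(mask, 0.0, x)         # dense part: outliers zeroed out
    return np.where(mask, x, quantize_uniform(dense, bits)), mask


rng = np.random.default_rng(0)
x = rng.normal(size=(128, 32))
x[5, 7], x[90, 1] = 40.0, -35.0            # two synthetic extreme outliers

plain = quantize_uniform(x, bits=3)
split, mask = dense_sparse_quantize(x, bits=3, outlier_frac=0.01)

err_plain = np.mean((plain - x) ** 2)
err_split = np.mean((split - x) ** 2)
print(f"plain 3-bit error: {err_plain:.4f}, dense-and-sparse error: {err_split:.4f}")
```

Removing roughly 1% of entries from the dense tensor shrinks its range, so the same number of bits covers the bulk of the distribution far more finely.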

TurboQuant (Near-Optimal Online KV Cache Quantization)

TurboQuant is Google Research’s latest contribution to this space, accepted at ICLR 2026. It targets a known weakness in all prior quantization methods: MSE-optimal scalar quantizers introduce systematic bias in inner product estimation, which compounds across attention computations. TurboQuant addresses this through a two-stage pipeline.

The first stage, PolarQuant (AISTATS 2026), applies a random orthogonal rotation to each key and value vector before quantization. The rotation preserves vector norms and inner products while spreading variance evenly across all coordinates, so each coordinate can be quantized accurately with a simple, analytically computed scalar quantizer. No training or calibration is needed. The second stage applies a 1-bit Quantized Johnson-Lindenstrauss (QJL) correction to the quantization residual, which yields an unbiased inner product estimator. Together, the two stages achieve at least 6× memory reduction and up to 8× faster attention computation on NVIDIA H100 GPUs at 3-bit precision, operating within a factor of approximately 2.7 of the information-theoretic limit. Because TurboQuant uses random matrices rather than learned ones, it applies to any model at inference time with no offline preparation.
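Both ingredients can be demonstrated independently of the full pipeline. The sketch below, assuming NumPy, shows (1) that a random orthogonal rotation flattens a spiky vector without changing inner products and (2) a sign-based random-projection estimator of an inner product in the spirit of QJL. It is not the TurboQuant implementation: the function names, dimensions, and the stand-in "residual" vector are illustrative assumptions.

```python
import numpy as np


def random_rotation(dim, rng):
    """Random orthogonal matrix from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.normal(size=(dim, dim)))
    return q * np.sign(np.diag(r))  # column sign fix for a uniform distribution


def sign_projection_estimate(query, residual, num_proj, rng):
    """Estimate <query, residual> from the signs of random projections of `residual`."""
    s = rng.normal(size=(num_proj, residual.shape[0]))
    signs = np.sign(s @ residual)    # one bit per projection would be stored
    norm = np.linalg.norm(residual)  # plus a single scalar norm
    # E[(s_i . q) * sign(s_i . r)] = sqrt(2/pi) * <q, r> / ||r||, hence the rescaling.
    return np.sqrt(np.pi / 2) * norm / num_proj * float((s @ query) @ signs)


rng = np.random.default_rng(0)
dim = 64
key = rng.normal(size=dim)
key[0] = 25.0                        # one dominant coordinate
query = rng.normal(size=dim)

rot = random_rotation(dim, rng)
key_rot, query_rot = rot @ key, rot @ query
print("inner product preserved:", np.isclose(query_rot @ key_rot, query @ key))
print("largest coordinate before/after:", np.abs(key).max(), np.abs(key_rot).max())

residual = 0.1 * rng.normal(size=dim)  # stand-in for a quantization residual
estimate = sign_projection_estimate(query, residual, num_proj=20000, rng=rng)
print("true vs estimated:", query @ residual, estimate)
```

A large number of projections is used here only to make the unbiasedness visible in a single run; it is not meant to reflect a practical bit budget.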

Multi-Query Attention (MQA) and Grouped-Query Attention (GQA)

MQA and GQA are architectural modifications that reduce the KV cache by design rather than compressing an existing one. In MQA, all query heads share a single key and value head, dramatically reducing cache size. GQA groups multiple query heads to share a smaller set of key-value heads, offering a middle ground between full multi-head attention and MQA. Both require either training from scratch or fine-tuning; without proper training, applying them to pre-trained models typically results in degraded performance.
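The sharing pattern is easy to see in code. Below is a minimal NumPy sketch of grouped-query attention without a causal mask; the function name and the tensor shapes are illustrative assumptions. Setting the number of KV heads to 1 gives MQA, and setting it equal to the number of query heads recovers standard multi-head attention.

```python
import numpy as np


def gqa_attention(q, k, v):
    """Grouped-query attention.

    q: (num_q_heads, seq, d); k, v: (num_kv_heads, seq, d).
    num_q_heads must be a multiple of num_kv_heads. No causal mask, for brevity.
    """
    num_q_heads, _, d = q.shape
    group = num_q_heads // k.shape[0]
    # Each cached KV head is reused by `group` consecutive query heads.
    k_full = np.repeat(k, group, axis=0)
    v_full = np.repeat(v, group, axis=0)
    scores = q @ k_full.transpose(0, 2, 1) / np.sqrt(d)
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v_full


rng = np.random.default_rng(0)
q = rng.normal(size=(8, 5, 16))   # 8 query heads
k = rng.normal(size=(2, 5, 16))   # only 2 KV heads are cached
v = rng.normal(size=(2, 5, 16))
q[3] = q[0]                       # heads 0 and 3 fall in the same KV group

out = gqa_attention(q, k, v)
cache_reduction = q.shape[0] / k.shape[0]
print(out.shape, cache_reduction)  # prints (8, 5, 16) 4.0
```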

GQA has since become the de facto standard in modern open-weight LLMs. In Llama 2, only the 70B model used GQA — the 7B and 13B variants used standard multi-head attention. Llama 3 extended GQA across both the 8B and 70B sizes. Mistral applied GQA from its initial 7B release in September 2023. For practitioners selecting or deploying new model families, GQA is now a baseline expectation rather than an optional optimization.

Multi-Head Latent Attention (MLA) — DeepSeek

MLA is DeepSeek’s architectural solution to KV cache memory, first introduced in DeepSeek-V2 (May 2024) and carried forward in DeepSeek-V3 and DeepSeek-R1. It is an attention mechanism equipped with low-rank key-value joint compression. Rather than storing full-dimensional key and value tensors per token, MLA projects them into a compressed latent vector during inference, storing the latent representation instead.
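The core idea, caching one small latent vector per token and expanding it into per-head keys and values on demand, can be sketched with random weights. This is an illustration assuming NumPy, not DeepSeek's implementation: all dimensions and weight names are hypothetical, and details of the real design such as its handling of rotary position embeddings are omitted.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, n_heads, d_head, d_latent, seq = 256, 8, 32, 32, 10

# Hypothetical projection weights (random here, learned in a real model).
w_down = rng.normal(size=(d_model, d_latent)) / np.sqrt(d_model)
w_up_k = rng.normal(size=(d_latent, n_heads, d_head)) / np.sqrt(d_latent)
w_up_v = rng.normal(size=(d_latent, n_heads, d_head)) / np.sqrt(d_latent)

hidden = rng.normal(size=(seq, d_model))
latent_cache = hidden @ w_down  # (seq, d_latent): the only tensor that is cached

# Keys and values are reconstructed from the latent when needed.
keys = np.einsum("tl,lhd->thd", latent_cache, w_up_k)
values = np.einsum("tl,lhd->thd", latent_cache, w_up_v)

# The key up-projection can be folded into the query, so attention scores
# can be computed against the latent cache without materializing full keys.
q = rng.normal(size=(n_heads, d_head))
scores_explicit = np.einsum("hd,thd->ht", q, keys)
q_absorbed = np.einsum("hd,lhd->hl", q, w_up_k)
scores_latent = q_absorbed @ latent_cache.T

mha_per_token = 2 * n_heads * d_head  # keys + values across all heads
mla_per_token = d_latent
reduction = mha_per_token / mla_per_token
print(np.allclose(scores_explicit, scores_latent), reduction)  # prints True 16.0
```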

The results are the most dramatic of any technique on this list. Compared to DeepSeek’s prior 67B dense model, DeepSeek-V2 reduces the KV cache by 93.3%, and DeepSeek reports that MLA itself outperforms standard multi-head attention. This is not a marginal improvement: it fundamentally changes the memory economics of serving large models, enabling significantly longer context windows and larger batch sizes on the same hardware. Research has also shown that MLA consistently offers higher expressive power than GQA under the same KV cache budget, providing a theoretical basis for the empirical gains. Among architectural approaches, MLA is currently the most validated at scale in open-weight models.

Low-Rank KV Cache Compression (Palu / LoRC)

Low-rank compression targets the hidden dimension of KV tensors rather than the sequence length or bit width. Palu is a post-training KV cache compression framework that reduces cache size through low-rank projection of key and value weight matrices. It proposes a medium-grained, group-head low-rank decomposition that balances accuracy and reconstruction overhead, and uses an efficient rank search algorithm based on Fisher information to automatically assign larger ranks to more sensitive weight matrices and smaller ranks to less critical ones.
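The underlying mechanism is a factorization of the projection weight into two thin matrices, so that the output of the first factor is cached and the second factor reconstructs keys on the fly. The sketch below uses a plain truncated SVD of a whole synthetic weight matrix, assuming NumPy; it does not implement Palu's group-head decomposition or its Fisher-information rank search, and all names and sizes are hypothetical.

```python
import numpy as np


def low_rank_factors(weight, rank):
    """Truncated SVD: weight (d_model, d_out) is approximated by a @ b."""
    u, s, vt = np.linalg.svd(weight, full_matrices=False)
    a = u[:, :rank] * s[:rank]  # (d_model, rank): produces the cached latent
    b = vt[:rank]               # (rank, d_out): reconstructs keys on the fly
    return a, b


rng = np.random.default_rng(0)
d_model, d_out, true_rank, seq = 64, 64, 8, 10

# Synthetic key projection with approximate low-rank structure plus small noise.
w_k = rng.normal(size=(d_model, true_rank)) @ rng.normal(size=(true_rank, d_out))
w_k += 0.01 * rng.normal(size=(d_model, d_out))

a, b = low_rank_factors(w_k, rank=8)
hidden = rng.normal(size=(seq, d_model))

full_keys = hidden @ w_k        # what an uncompressed cache would store
latent = hidden @ a             # (seq, 8): what the compressed cache stores
restored = latent @ b

rel_err = np.linalg.norm(restored - full_keys) / np.linalg.norm(full_keys)
print(f"cache entries per token: {d_out} -> {latent.shape[1]}, relative error {rel_err:.4f}")
```

In a real model the achievable rank differs from one weight matrix to another, which is why an automatic per-matrix rank assignment matters.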

Related methods in this family include LoRC, SVDq, CSKV, and ReCalKV, all of which exploit the observation that key and value matrices across attention heads exhibit significant low-rank structure, particularly for longer contexts. Low-rank methods are orthogonal to both quantization and token eviction and can be stacked with either for compounded compression. This family remains relatively underexplored compared to eviction-based methods, making it an active area of research.

Key Takeaways:

KV cache size grows linearly with both sequence length and batch size, making compression essential for high-throughput serving.

Token eviction (H2O, StreamingLLM, SnapKV) is training-free and hardware-compatible but discards tokens permanently; SnapKV selects clustered important KV positions per head via pooled attention scores, not flat cumulative scores.

Quantization (KIVI, KVQuant, TurboQuant) reduces memory without removing tokens. KIVI achieves 2.6× combined peak memory reduction (model weights + KV cache) at 2-bit precision; TurboQuant achieves 6× memory reduction at 3-bit precision with no calibration, operating near the information-theoretic limit.

Low-rank methods (Palu, LoRC, MLA) target hidden dimension redundancy and remain underexplored relative to token eviction.

Architectural solutions (GQA, MLA) must be incorporated at training time. In Llama 2, only the 70B model used GQA; Llama 3 extended it across all sizes. MLA achieves a 93.3% KV cache reduction in DeepSeek-V2.

The 2026 research frontier is moving toward latent-space compaction (Attention Matching, 50× compaction) and reasoning-aware compression (TriAttention, 10.7× memory reduction on AIME25 at matched accuracy).

Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.